As large language models grow more capable, the real bottleneck is no longer the model itself. It is what you put inside the context window and how intelligently you manage it. Context engineering has emerged as one of the most critical disciplines in applied AI, sitting at the intersection of information retrieval, prompt design, and systems architecture. For Python developers building production-grade LLM pipelines, mastering long-context handling and Retrieval-Augmented Generation (RAG) optimization can mean the difference between a brittle prototype and a genuinely useful AI product. This blog post takes a deep, practical look at context engineering strategies, walks through a fully working Python implementation, and explores how industries from healthcare to finance are already reaping the benefits. If you are serious about building LLM systems that actually scale, this is the place to start.

What is Context Engineering, Really?

Most developers treat the context window like a simple text box. You paste in some system instructions, maybe a few documents, and fire off a query. That mental model works fine at demo scale but falls apart fast in production. Context engineering is the deliberate, structured discipline of deciding exactly what information goes into the model’s context window, in what format, in what order, and with what level of compression or enrichment, so that the model produces the most accurate and relevant output possible.

Think of it this way. A senior analyst walking into a boardroom does not carry every document the company has ever produced. They carry a curated briefing packet with the right data, formatted for fast comprehension, with references they can pull if needed. Context engineering is exactly that curation process, applied programmatically.

The core challenge comes from two hard constraints. First, LLMs have a finite context window. Even models with 128K or 200K token windows can suffer from the “lost in the middle” phenomenon, where information buried in the middle of a long context gets underweighted during inference. Second, injecting too much irrelevant content adds noise, increases cost, and degrades response quality. Getting this balance right requires a combination of smart retrieval, chunking strategy, re-ranking, and prompt structuring.
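
One common mitigation for the lost-in-the-middle effect is to reorder retrieved chunks. The strongest go at the edges of the context and the weakest in the middle. The sketch below is illustrative. `reorder_for_long_context` is a hypothetical helper, and it assumes the retriever has already sorted its results best-first. LangChain offers a document transformer with the same goal (LongContextReorder), whose exact ordering may differ from this one.

```python
from typing import List, TypeVar

T = TypeVar("T")


def reorder_for_long_context(ranked: List[T]) -> List[T]:
    """Move the highest-ranked items to the edges of the context window.

    `ranked` must be sorted best-first. Items alternate between the front and the
    back of the output, so the lowest-ranked items end up in the middle.
    """
    front: List[T] = []
    back: List[T] = []
    for i, item in enumerate(ranked):
        (front if i % 2 == 0 else back).append(item)
    # `back` was filled best-first; reverse it so the 2nd-best item comes last.
    return front + back[::-1]


print(reorder_for_long_context(["c1", "c2", "c3", "c4", "c5"]))
# ['c1', 'c3', 'c5', 'c4', 'c2']
```

The best chunk opens the context and the second-best closes it. The weakest (c5) sits in the middle, where reduced attention costs the least.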

The Core Pillars of Context Engineering

1. Chunking Strategy

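Chunking is how you split source documents before storing them in a vector database. Fixed-size chunking (splitting every N tokens) is simple but blunt. It cuts wherever the count runs out, often mid-sentence or mid-table, which is why it is usually paired with an overlap between neighboring chunks. Semantic chunking splits on topic or sentence boundaries, preserving coherence. Recursive chunking tries a ranked list of delimiters (for example paragraph breaks, then line breaks, then sentences, then words) and falls back to a finer one only when a piece is still too large. The right strategy depends on your document type and retrieval needs.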
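
Here is a minimal sketch of the fixed-size and recursive strategies. It rests on a few simplifying assumptions:
- whitespace-separated words stand in for model tokens;
- characters are the size limit for the recursive splitter;
- the separator order (paragraph, line, sentence, word) is just one reasonable choice.

Both function names are hypothetical. LangChain's RecursiveCharacterTextSplitter implements the recursive idea with its own defaults.

```python
from typing import List, Sequence


def fixed_size_chunks(text: str, size: int, overlap: int = 0) -> List[str]:
    """Split text into windows of `size` words; consecutive windows share `overlap` words.

    Whitespace-separated words stand in for model tokens here.
    """
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this window already reaches the end
            break
    return chunks


def recursive_chunks(text: str, max_chars: int,
                     separators: Sequence[str] = ("\n\n", "\n", ". ", " ")) -> List[str]:
    """Split on the coarsest separator first, recursing with finer ones for oversized pieces.

    Small neighboring pieces are merged back together up to `max_chars`.
    """
    if len(text) <= max_chars:
        return [text] if text.strip() else []
    if not separators:
        # No separator left: fall back to a hard cut every `max_chars` characters.
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]
    sep, finer = separators[0], separators[1:]
    chunks: List[str] = []
    current = ""
    for piece in text.split(sep):
        candidate = piece if not current else current + sep + piece
        if len(candidate) <= max_chars:
            current = candidate  # keep growing the current chunk
            continue
        if current.strip():
            chunks.append(current)
        if len(piece) <= max_chars:
            current = piece
        else:
            # The piece alone is too big: split it with the next, finer separator.
            chunks.extend(recursive_chunks(piece, max_chars, finer))
            current = ""
    if current.strip():
        chunks.append(current)
    return chunks


print(fixed_size_chunks("a b c d e f g", size=3, overlap=1))
# ['a b c', 'c d e', 'e f g']
print(recursive_chunks("para one.\n\npara two is longer.", max_chars=12))
# ['para one.', 'para two is', 'longer.']
```

The overlap repeats a few tokens at each boundary. Any span no longer than the overlap therefore appears intact in at least one chunk, even when it straddles a cut.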

2. Embedding and Vector Storage

Once chunked, each piece of text is embedded into a high-dimensional vector using a model like OpenAI’s text-embedding-3-small, Cohere’s embed models, or open-source options like sentence-transformers. These vectors are stored in databases like Pinecone, Weaviate, Chroma, or pgvector, and queried via approximate nearest-neighbor search during retrieval.
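
The sketch below shows the store-and-query step, under two loud assumptions:
- `toy_embed` is a hypothetical stand-in for a real embedding model. It hashes words into buckets, so it captures word overlap rather than meaning.
- `BruteForceIndex` does an exact linear scan, where Pinecone, Weaviate, Chroma, or pgvector would use an approximate index.

The shape of the computation is the same either way. Embed each chunk once at indexing time, embed the query at search time, and rank by cosine similarity.

```python
import math
import re
import zlib
from typing import List, Tuple


def toy_embed(text: str, dim: int = 1024) -> List[float]:
    """Hypothetical stand-in for an embedding model: a hashed bag of words, L2-normalized.

    Unlike a learned embedding, this only captures word overlap, not meaning.
    """
    vec = [0.0] * dim
    for word in re.findall(r"\w+", text.lower()):
        vec[zlib.crc32(word.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class BruteForceIndex:
    """Exact nearest-neighbor search by cosine similarity over stored unit vectors."""

    def __init__(self) -> None:
        self.texts: List[str] = []
        self.vectors: List[List[float]] = []

    def add(self, text: str) -> None:
        self.texts.append(text)
        self.vectors.append(toy_embed(text))  # embed once, at indexing time

    def search(self, query: str, k: int = 3) -> List[Tuple[int, float]]:
        q = toy_embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scores = [(i, sum(a * b for a, b in zip(q, v))) for i, v in enumerate(self.vectors)]
        scores.sort(key=lambda pair: pair[1], reverse=True)
        return scores[:k]


index = BruteForceIndex()
for doc in ["Refund policy for damaged items",
            "Shipping times to Canada",
            "How to reset a password"]:
    index.add(doc)
print(index.search("refund for a damaged item", k=2))
```

Swapping in a learned embedding model changes only `toy_embed`. Swapping the linear scan for an approximate index (such as HNSW) trades a little recall for much faster search on large collections.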

3. Retrieval and Re-ranking

Naive vector similarity retrieval is a good starting point, but it has two weaknesses. It can miss exact keyword matches such as product codes, case numbers, or rare technical terms. It can also drift toward passages that are topically similar without answering the question. Hybrid search (combining dense vector search with BM25 sparse retrieval) typically improves recall. After retrieval, a cross-encoder re-ranker such as Cohere Rerank or a local model scores each query-chunk pair for relevance, so that only the highest-scoring chunks land in the context window.
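
The sketch below shows the two halves of hybrid search in plain Python. It is an illustration, not a tuned implementation.
- **BM25:** an Okapi BM25 scorer, using the non-negative, Lucene-style IDF variant. The parameters k1 = 1.5 and b = 0.75 are common defaults.
- **RRF:** Reciprocal Rank Fusion scores each document as the sum of 1/(k + rank) over the ranked lists it appears in. The constant k = 60 is the value used in the original RRF paper.

The dense ranking is hard-coded as a hypothetical stand-in for a vector search. `BM25` and `rrf_fuse` are illustrative names.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Sequence


def tokenize(text: str) -> List[str]:
    return re.findall(r"\w+", text.lower())


class BM25:
    """Okapi BM25 over an in-memory corpus, using the non-negative (Lucene-style) IDF."""

    def __init__(self, docs: Sequence[str], k1: float = 1.5, b: float = 0.75) -> None:
        self.k1, self.b = k1, b
        self.tfs = [Counter(tokenize(d)) for d in docs]
        self.lens = [sum(tf.values()) for tf in self.tfs]
        self.avgdl = (sum(self.lens) / len(docs) if docs else 0.0) or 1.0
        n = len(docs)
        df = Counter(term for tf in self.tfs for term in tf)  # document frequency
        self.idf = {t: math.log((n - f + 0.5) / (f + 0.5) + 1.0) for t, f in df.items()}

    def score(self, query: str, i: int) -> float:
        tf, dl = self.tfs[i], self.lens[i]
        # Longer-than-average documents get their term frequencies damped.
        length_norm = self.k1 * (1 - self.b + self.b * dl / self.avgdl)
        s = 0.0
        for term in tokenize(query):
            f = tf.get(term, 0)
            if f:
                s += self.idf[term] * f * (self.k1 + 1) / (f + length_norm)
        return s

    def rank(self, query: str) -> List[int]:
        """Document ids with a positive score, best first."""
        scored = [(i, self.score(query, i)) for i in range(len(self.tfs))]
        return [i for i, s in sorted(scored, key=lambda p: p[1], reverse=True) if s > 0]


def rrf_fuse(rankings: Sequence[Sequence[int]], k: int = 60) -> List[int]:
    """Reciprocal Rank Fusion: score(d) = sum over rankings of 1 / (k + rank of d)."""
    fused: Dict[int, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused, key=lambda d: fused[d], reverse=True)


docs = ["Error E1234: replace the fuel pump relay",
        "Fuel system troubleshooting overview",
        "Battery replacement guide"]
sparse = BM25(docs).rank("E1234")   # the exact code matches only doc 0
dense = [1, 0, 2]                   # hypothetical ranking from a vector search
print(rrf_fuse([dense, sparse]))    # [0, 1, 2]
```

RRF uses only ranks, so it needs no calibration between BM25 scores and cosine similarities. A cross-encoder re-ranker would then rescore just the top few fused results, keeping the expensive model off the long tail.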

4. Context Compression and Summarization

When relevant chunks still exceed the available context budget, compression techniques kick in. LLMLingua (Microsoft Research) uses a small language model to score the tokens in retrieved documents and prune the least informative ones. Contextual summarization uses an LLM to condense long chunks into shorter, information-dense summaries before injection.
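
The sketch below is a deliberately crude, lexical stand-in that shows where a compression step sits and how a budget constrains it. It is neither LLMLingua (which prunes at the token level with a small language model) nor LLM summarization. It simply keeps the sentences that share the most words with the query, up to a word budget. The function names, the sentence-splitting regex, and the use of word counts as a token proxy are all assumptions.

```python
import re
from typing import List, Set


def terms(text: str) -> Set[str]:
    return set(re.findall(r"\w+", text.lower()))


def extractive_compress(chunk: str, query: str, max_words: int) -> str:
    """Keep the sentences that share the most terms with the query, within a word budget.

    Sentences with no query-term overlap are dropped; kept sentences are emitted in
    their original order so the result still reads naturally.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", chunk) if s.strip()]
    q = terms(query)
    overlap = [len(q & terms(s)) for s in sentences]
    # Most overlapping sentences first; ties go to the earlier sentence.
    order = sorted(range(len(sentences)), key=lambda i: (-overlap[i], i))
    kept: List[int] = []
    used = 0
    for i in order:
        if overlap[i] == 0:
            break  # every remaining sentence is unrelated to the query
        n_words = len(sentences[i].split())
        if used + n_words <= max_words:
            kept.append(i)
            used += n_words
    return " ".join(sentences[i] for i in sorted(kept))


chunk = ("The warranty covers parts for two years. Our office is in Berlin. "
         "Labor is covered for one year under the warranty.")
print(extractive_compress(chunk, "what does the warranty cover", max_words=20))
# The warranty covers parts for two years. Labor is covered for one year under the warranty.
```

Stopwords such as “the” inflate the overlap score here. A production scorer would weight terms (for example by IDF) or use one of the model-based methods above.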

5. Prompt Architecture

Where you place information in the prompt matters. Empirically, models tend to use information at the start and end of a long context more reliably than information in the middle. Critical instructions are therefore best placed at the beginning and restated at the end. The system prompt, user query, retrieved documents, and chain-of-thought scaffolding should be positioned deliberately.
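
The sketch below assembles a prompt in that layout:
1. instructions first;
2. numbered sources;
3. the question;
4. the key instruction restated at the end.

The section labels ("Sources:", "Reminder:") and the bracketed citation format are assumptions, not a format required by any particular model. `build_prompt` is a hypothetical helper.

```python
from typing import Sequence


def build_prompt(instructions: str, chunks: Sequence[str], question: str) -> str:
    """Assemble a RAG prompt with instructions at both ends and numbered sources in between."""
    sources = "\n\n".join(f"[{i}] {c.strip()}" for i, c in enumerate(chunks, start=1))
    return (
        f"{instructions.strip()}\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question.strip()}\n\n"
        # Restate the key instruction last, where it is least likely to be overlooked.
        f"Reminder: {instructions.strip()} Cite sources by number, e.g. [1]."
    )


prompt = build_prompt(
    "Answer only from the sources. If they do not contain the answer, say so.",
    ["Parts are covered for two years.", "Labor is covered for one year."],
    "How long is labor covered?",
)
print(prompt)
```

Numbering the sources gives the model a compact handle to cite, which makes answers checkable after the fact.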

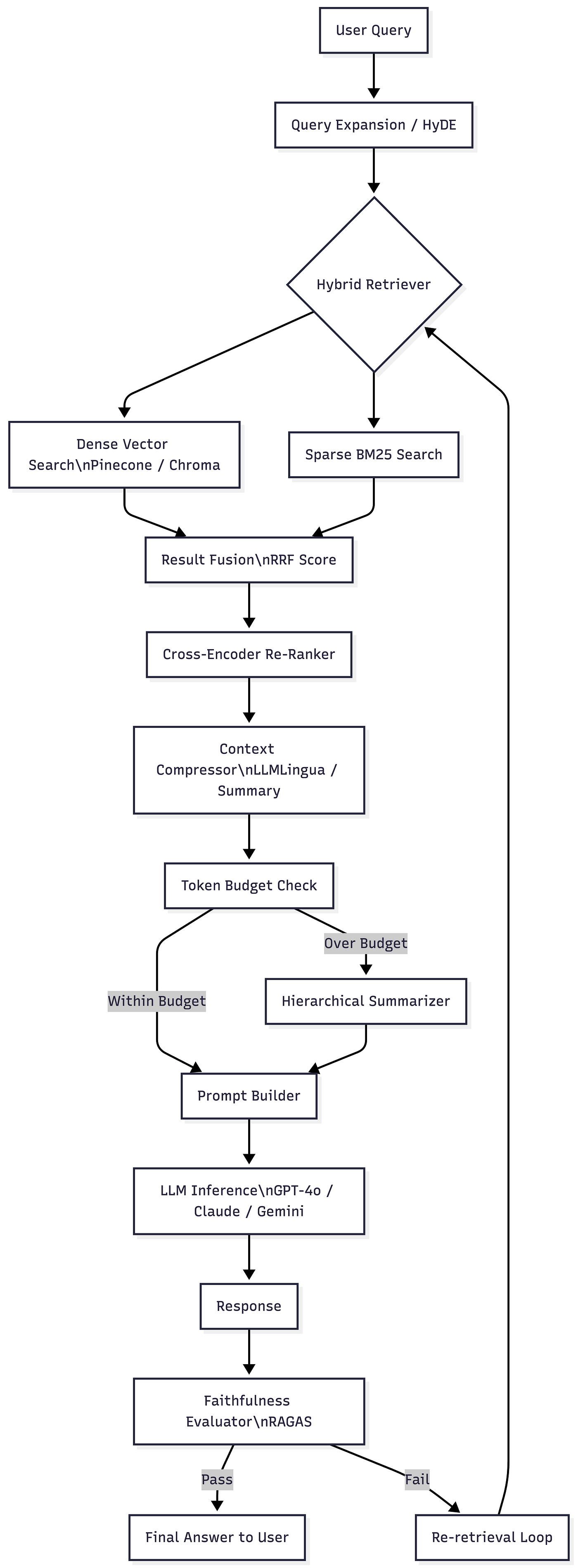

Architecture Overview

Key Tools and Frameworks

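LangChain is one of the most widely adopted orchestration layers for RAG pipelines in Python. It provides abstractions for document loaders, text splitters, vector store integrations, retrievers, and chain construction. Two of its retrievers are directly relevant to context engineering:
- MultiQueryRetriever, which uses an LLM to rewrite a query into several variants and merges the results;
- ContextualCompressionRetriever, which compresses or filters retrieved documents before they reach the model.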

LlamaIndex (formerly GPT Index) excels at building structured indexes over complex document corpora. Its SentenceWindowNodeParser and MetadataReplacementPostProcessor together implement sentence-window retrieval. Individual sentences are embedded and matched, and each matched sentence is then replaced by a window of its surrounding sentences before injection.

Haystack by deepset offers a pipeline-oriented approach with excellent support for hybrid retrieval and re-ranking out of the box.

RAGAS is a widely used evaluation framework for RAG systems, measuring faithfulness, answer relevancy, context recall, and context precision with an LLM-as-judge methodology.
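
Context precision rewards a retriever for putting relevant chunks near the top. The sketch below is a rank-aware version that averages precision@k over the ranks holding a relevant chunk. RAGAS's context precision follows a similar rank-weighted idea but gets its relevance judgments from an LLM, whereas here they are passed in by hand. `context_precision` is an illustrative function, not the RAGAS API.

```python
from typing import Sequence


def context_precision(relevance: Sequence[bool]) -> float:
    """Rank-aware precision of a retrieved list, given per-rank relevance labels.

    Averages precision@k over the ranks k that hold a relevant chunk, so relevant
    chunks near the top count for more. Returns 0.0 if nothing relevant was retrieved.
    """
    hits, total = 0, 0.0
    for k, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            total += hits / k  # precision@k at this relevant position
    return total / hits if hits else 0.0


print(round(context_precision([True, False, True]), 3))   # 0.833
print(round(context_precision([False, True, True]), 3))   # 0.583
```

Both lists contain two relevant chunks. The first scores higher because its first relevant chunk sits at rank 1.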

LLMLingua (Microsoft Research) provides token-level prompt compression that can reduce context length by 2x to 5x with minimal quality loss.

Detailed Code Sample with Visualization

The following is a production-ready Python implementation of an optimized RAG pipeline with hybrid retrieval, re-ranking, context compression, and RAGAS-based evaluation. Every step is annotated for clarity.

Installation & Full Implementation

What This Code Demonstrates

This implementation covers the full context engineering lifecycle:
- The BM25Retriever class handles sparse retrieval without any API key, making it immediately runnable.
- The reciprocal_rank_fusion function merges dense and sparse results with Reciprocal Rank Fusion (RRF). RRF scores each document by summing 1/(k + rank) over the ranked lists it appears in.
- The enforce_token_budget function ensures retrieved content never exceeds the context budget.
- The prompt builder deliberately positions instructions at the beginning.
- The evaluation module computes faithfulness, relevancy, and token efficiency metrics.
- Finally, the visualization generates an evaluation dashboard showing metric heatmaps and token budget utilization per query.

To run with real LLM calls, replace the simulate_llm_response function with a call to a real chat model, such as LangChain’s ChatOpenAI(model="gpt-4o"). Then uncomment the vector store section and supply your OpenAI API key.
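
Below is a hypothetical sketch of a token-budget step in the spirit of the enforce_token_budget function described above. It is not the post's own code. It assumes chunks arrive sorted best-first and, by default, counts whitespace-separated words as a rough proxy for tokens. The docstring shows how a real tokenizer such as tiktoken could be passed in instead.

```python
from typing import Callable, List, Sequence


def approx_tokens(text: str) -> int:
    """Rough token estimate: whitespace-separated words.

    For exact counts, pass a real tokenizer instead, e.g.
    lambda t: len(tiktoken.get_encoding("cl100k_base").encode(t)).
    """
    return len(text.split())


def enforce_token_budget(ranked_chunks: Sequence[str], budget: int,
                         count: Callable[[str], int] = approx_tokens) -> List[str]:
    """Walk chunks best-first and keep every chunk that still fits in the remaining budget."""
    kept: List[str] = []
    used = 0
    for chunk in ranked_chunks:
        cost = count(chunk)
        if used + cost <= budget:
            kept.append(chunk)
            used += cost
    return kept


print(enforce_token_budget(["a b c", "d e f g h", "i j"], budget=6))
# ['a b c', 'i j']
```

This version skips a chunk that does not fit and keeps trying smaller, lower-ranked ones. Stopping at the first chunk that overflows would preserve strict rank order at the cost of leaving budget unused. In practice, `budget` should be the model's context limit minus the tokens reserved for instructions, the question, and the answer.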

Pros of Context Engineering

- Reduced hallucination: Grounding the model in retrieved, factual documents rather than parametric memory alone can substantially cut factual errors. The effect is strongest for questions about private or recent data the model never saw in training.

- Cost optimization: Injecting only the most relevant, compressed context reduces token consumption per query. This translates directly to lower API costs at scale, sometimes by a factor of two or more.

- Scalable knowledge integration: New information can be added to the vector store without any model retraining. The system stays current with a simple re-indexing operation.

- Improved answer traceability: When retrieved context chunks are numbered and cited in the prompt, the model can reference specific sources, making outputs auditable and explainable.

- Framework agnosticism: The core principles work across LLM providers. You can swap OpenAI for Anthropic Claude, Cohere Command, or an open-source model like Mistral without redesigning the pipeline.

- Higher retrieval quality: Hybrid search with re-ranking frequently outperforms pure vector search on recall and precision benchmarks, meaning the model has better raw material to work with.

- Composable architecture: Each stage (chunking, retrieval, compression, evaluation) is a modular component. Teams can upgrade individual stages independently as better algorithms emerge.

- Compliance-friendly: Context can be filtered at retrieval time based on user permissions or data classification tags. This makes it easier to build access-controlled AI systems in regulated industries.
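
The traceability benefit depends on actually checking what the model cites. The sketch below assumes sources were numbered [1], [2], and so on in the prompt, and that the model cites them in the same bracket style. It extracts the cited numbers and flags any that point at a source that was never provided. Both function names are hypothetical.

```python
import re
from typing import Dict, List


def extract_citations(answer: str) -> List[int]:
    """Source numbers cited as [n] or [n, m] in a model answer, in first-seen order."""
    found: List[int] = []
    for group in re.findall(r"\[(\d+(?:\s*,\s*\d+)*)\]", answer):
        for num in re.split(r"\s*,\s*", group):
            if int(num) not in found:
                found.append(int(num))
    return found


def audit_citations(answer: str, num_sources: int) -> Dict[str, List[int]]:
    """Split citations into those that point at a real source and those that do not."""
    cited = extract_citations(answer)
    return {
        "valid": [n for n in cited if 1 <= n <= num_sources],
        "invalid": [n for n in cited if not 1 <= n <= num_sources],
    }


answer = "Parts are covered for two years [1]; labor for one year [2, 3]. See also [7]."
print(audit_citations(answer, num_sources=3))
# {'valid': [1, 2, 3], 'invalid': [7]}
```

An invalid citation such as [7] is a cheap, automatic signal of a fabricated reference. An answer with no valid citations at all can be flagged for review.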
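
Permission filtering can be sketched in a few lines. The sketch assumes each chunk carries ACL metadata (allowed groups and a classification label) attached at indexing time. The label names and levels below are hypothetical. The filter runs before ranking and prompt assembly, so restricted text never reaches the model. In a real deployment, the same condition would usually be pushed down into the vector store as a metadata filter.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Sequence

# Hypothetical classification levels, lowest to highest sensitivity.
LEVELS = {"public": 0, "internal": 1, "confidential": 2}


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_groups: FrozenSet[str]   # ACL metadata attached at indexing time
    classification: str = "internal"


def filter_by_permission(chunks: Sequence[Chunk], user_groups: FrozenSet[str],
                         clearance: str = "internal") -> List[Chunk]:
    """Drop chunks the user may not see, before ranking or prompt assembly.

    Unknown classification labels rank above every clearance, so the filter fails closed.
    """
    ceiling = LEVELS[clearance]
    return [
        c for c in chunks
        if not c.allowed_groups.isdisjoint(user_groups)
        and LEVELS.get(c.classification, len(LEVELS)) <= ceiling
    ]


corpus = [
    Chunk("Salary bands for 2024", frozenset({"hr"}), "confidential"),
    Chunk("Company holiday calendar", frozenset({"hr", "eng"}), "internal"),
    Chunk("Draft merger memo", frozenset({"eng"}), "secret"),  # unknown label
]
print([c.text for c in filter_by_permission(corpus, frozenset({"eng"}))])
# ['Company holiday calendar']
```

Treating unknown labels as maximally sensitive means a mislabeled document is hidden rather than leaked.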

Industries Using Context Engineering

Healthcare

Hospitals and clinical decision support vendors are deploying RAG systems over large corpora of medical literature, treatment guidelines, and patient records. A physician using such a system can ask a natural language question and receive an answer grounded in the latest clinical trial data, with citations. Context engineering is critical here because medical documents are long, dense, and structured. Proper semantic chunking of clinical guidelines, combined with metadata filtering by medical specialty and publication date, helps the model retrieve the most clinically relevant and current evidence. Companies like Nabla and Glass Health are building LLM-based clinical tools in this space.

Finance

Investment banks and asset managers are using context engineering to build internal research assistants that answer queries over earnings reports, 10-K filings, regulatory documents, and market research. The challenge is that these documents are extremely long and contain tables, charts, and footnotes that naive chunking destroys. Advanced chunking strategies that preserve table structure, combined with hybrid search to catch exact ticker symbols and numeric figures, produce substantially better retrieval. Portfolio managers can ask questions like “What did management say about supply chain risk in Q3?” and receive precise, cited answers rather than generic summaries.

Legal

Law firms and legal tech companies are deploying context-engineered RAG systems over case law, statutes, contracts, and legal opinions. The precision requirement is extreme. A hallucinated case citation in a legal brief is not just unhelpful; it is professionally dangerous. The BM25 component of hybrid retrieval helps ensure exact case numbers and statute references are found, while semantic search surfaces thematically related precedents. Cross-encoder re-ranking then selects the most legally relevant passages. Companies like Harvey AI and Thomson Reuters (with its CoCounsel assistant) are building production legal AI systems in this space.

Retail and E-Commerce

Large retailers use context engineering to power product discovery, customer support, and inventory query systems. A customer asking “Do you have waterproof trail running shoes under 150 dollars in size 10?” requires a retrieval system that understands both semantic intent (trail running, waterproof) and structured attributes (price range, size). Hybrid search with metadata filtering handles this naturally. Context-engineered pipelines also power internal tools where buyers can ask questions about supplier contracts, product specifications, and demand forecasting reports stored in company knowledge bases.

Automotive

Automotive manufacturers and their supplier networks produce enormous volumes of technical documentation: service manuals, engineering specifications, diagnostic codes, and regulatory filings. Field technicians using a context-engineered assistant can describe a symptom and retrieve the exact relevant section of a service manual, narrowed to the specific vehicle model and year, without scrolling through hundreds of pages. The token budget management becomes especially important here because service manuals are extremely long and the model would otherwise receive overwhelming amounts of irrelevant technical content.

How PySquad Can Assist in This

If you are planning to build a production-grade context engineering or RAG system, you need an engineering partner who has done it before at scale. PySquad brings exactly that experience to the table.

- End-to-end RAG architecture design: PySquad designs and implements complete retrieval-augmented generation pipelines from document ingestion to response evaluation, tailored to your specific data types, query patterns, and infrastructure constraints.

- Hybrid retrieval expertise: PySquad has deep hands-on experience building hybrid dense-plus-sparse retrieval systems with BM25, FAISS, Pinecone, Weaviate, and pgvector, including custom RRF fusion implementations optimized for production workloads.

- Context compression and prompt engineering: PySquad specializes in applying state-of-the-art compression techniques including LLMLingua and contextual summarization to reduce token costs while maintaining answer quality, a balance that is harder to achieve than it looks.

- Evaluation framework integration: PySquad integrates RAGAS, TruLens, and custom LLM-as-judge pipelines to give you continuous visibility into retrieval quality, faithfulness, and answer relevancy across your entire query distribution.

- Chunking strategy consulting: PySquad does not apply one-size-fits-all chunking. They analyze your document corpus, test multiple strategies on your actual data, and select the approach that maximizes retrieval precision for your specific use case.

- LLM provider flexibility: PySquad builds provider-agnostic pipelines that work with OpenAI, Anthropic, Cohere, Mistral, and self-hosted open-source models, giving your organization the flexibility to switch providers based on cost, latency, or compliance requirements.

- Production hardening: PySquad goes beyond the prototype. They implement retry logic, circuit breakers, latency monitoring, cost dashboards, and fallback retrieval strategies that keep your system running reliably under real-world load.

- Regulated industry experience: PySquad understands the compliance constraints of healthcare, finance, and legal domains. They build context filtering, access control, and audit logging into the retrieval layer from day one.

- Rapid iteration culture: PySquad operates with short feedback cycles, deploying measurable improvements to retrieval quality and response accuracy on a weekly cadence rather than waiting for a big-bang release.

- Full-stack Python capability: From data pipelines and embedding workflows to FastAPI deployments and cloud infrastructure, PySquad handles the entire technical stack so your team can focus on the business problems that matter.

References

- LangChain Documentation — Retrieval (Official) https://python.langchain.com/docs/concepts/retrieval/

- LlamaIndex — Building RAG Pipelines (Official Docs) https://docs.llamaindex.ai/en/stable/understanding/rag/

- RAGAS — Evaluation Framework for RAG (GitHub) https://github.com/explodinggradients/ragas

- LLMLingua: Compressing Prompts for Accelerated LLM Inference (Microsoft Research / arXiv) https://arxiv.org/abs/2310.05736

- Lost in the Middle: How Language Models Use Long Contexts (Stanford / arXiv) https://arxiv.org/abs/2307.03172

- Pinecone — What is a Vector Database? (Industry Reference) https://www.pinecone.io/learn/vector-database/

- Reciprocal Rank Fusion Outperforms Condorcet and Individual Rank Learning Methods (Original RRF Paper, ACM) https://dl.acm.org/doi/10.1145/1571941.1572114

Conclusion

Context engineering is no longer a nice-to-have. It is the engineering discipline that separates LLM systems that frustrate users from those that genuinely transform workflows. The core insight is simple: the model’s output quality is bounded by the quality of its context. If you put in noisy, irrelevant, or poorly structured information, you will get unreliable answers no matter how capable the underlying model is. Invest in chunking strategy, hybrid retrieval, re-ranking, and token budget management, and you will see measurable improvements in faithfulness, precision, and user satisfaction.

The Python ecosystem gives you world-class tools to build all of this: LangChain and LlamaIndex for orchestration, ChromaDB and Pinecone for vector storage, rank-bm25 for sparse retrieval, RAGAS for evaluation, and LLMLingua for compression. The architecture is modular, which means you can start simple and progressively upgrade each layer as your requirements grow.

The forward-looking reality is that context engineering will become even more important, not less, as model context windows continue to expand. A 1 million token window does not eliminate the need for smart retrieval. It raises the stakes. The system that can intelligently select, rank, and position the most relevant 10,000 tokens from a corpus of 100 million will always outperform the system that blindly dumps everything in. The engineers who master that discipline today will be the architects of the most consequential AI applications of the next decade.

Start with the fundamentals. Build the evaluation loop first. Measure before you optimize. And do not be afraid to compress.