The era of Physical AI is no longer a futuristic concept sitting on the pages of science fiction novels. It is happening right now, in warehouses, surgical suites, autonomous vehicles, and agricultural fields across the globe. Physical AI refers to the discipline of deploying intelligent algorithms into machines that operate in and interact with the real physical world. Python has emerged as the lingua franca for this domain, offering a rich ecosystem of tools spanning Robot Operating System (ROS), OpenAI Gym, PyBullet, and Stable-Baselines3. This blog post takes you on a complete technical journey from building and training a robotic agent inside a simulation environment to deploying it on real hardware. Whether you are a robotics engineer, an AI researcher, or a decision-maker evaluating automation investments, this guide will give you a firm grasp of how modern Physical AI pipelines are architected, trained, and shipped.

What is Physical AI?

Physical AI is the convergence of machine learning, control theory, computer vision, and mechanical engineering into systems that can perceive their environment, reason about it, and take physical actions. Unlike traditional software agents that live inside a browser or a cloud server, Physical AI agents operate under real-world constraints such as gravity, sensor noise, latency, and mechanical wear.

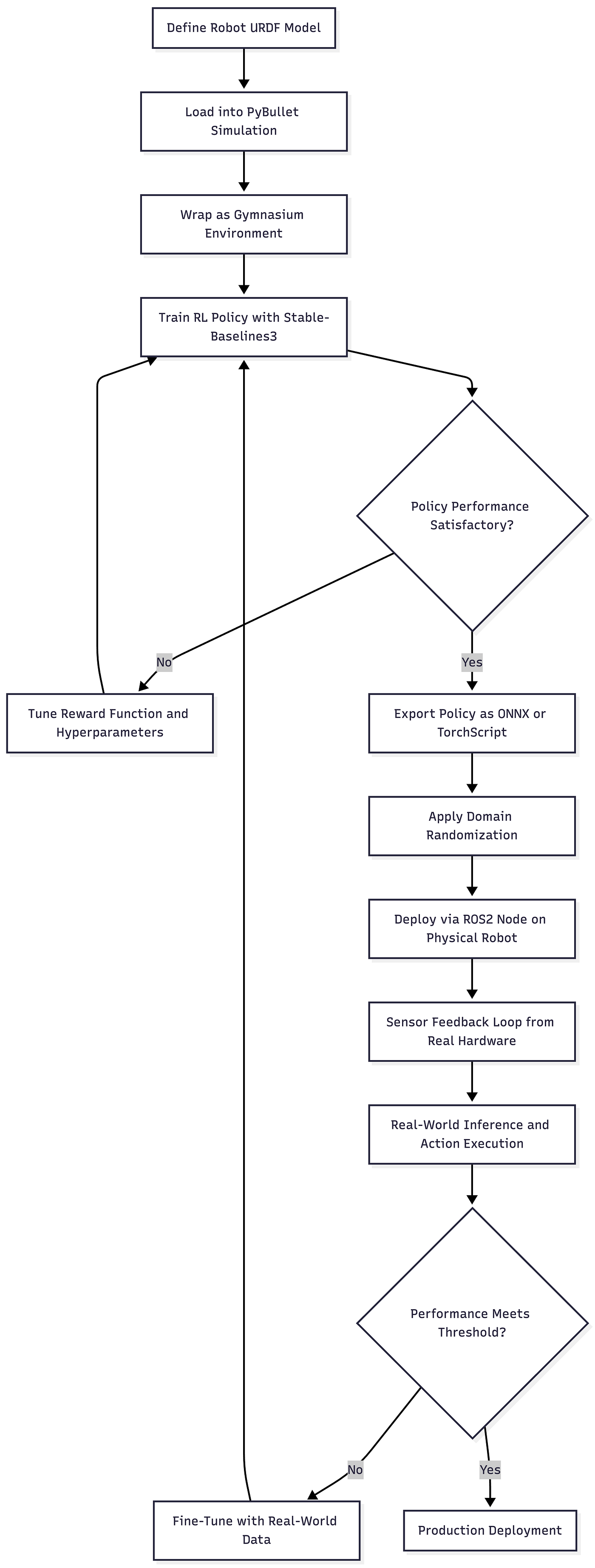

The typical Physical AI development lifecycle follows a sim-to-real pipeline. You train your agent in a simulated world because running thousands of training episodes on a physical robot is slow, expensive, and potentially dangerous. Once the agent achieves reliable performance in simulation, you apply a set of domain adaptation techniques and transfer the policy to the real robot.

Key Tools and Frameworks

Robot Operating System (ROS / ROS2)

ROS is the backbone of modern robotics software. It is a middleware layer that provides standardized communication between sensor nodes, actuator nodes, planning modules, and perception systems. ROS2, the modern successor, replaces ROS1's custom transport with DDS-based communication, adds configurable quality-of-service settings, is designed to support real-time control loops, and includes built-in security features (SROS2). Its Python client library, rclpy, makes it fully accessible to Python developers.

OpenAI Gym and Gymnasium

Gymnasium (the maintained fork of OpenAI Gym, developed by the Farama Foundation) provides a standardized environment interface for reinforcement learning. Because every environment implements the same reset(), step(), and render() contract, you can swap environments without changing your training loop. Robotics-oriented options include Gymnasium's built-in MuJoCo environments (such as Reacher and Ant), community PyBullet environments (PyBullet-Gym), and NVIDIA's GPU-accelerated Isaac Gym.
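The reset/step contract is easiest to see in a small rollout loop. The sketch below assumes Gymnasium's current conventions: reset(seed=...) returns (observation, info) and step(action) returns (observation, reward, terminated, truncated, info). ToyLineEnv is a hypothetical one-dimensional task written in plain Python so the example runs without Gymnasium installed.

```python
import random
from typing import Any, Callable, List, Optional, Tuple


class ToyLineEnv:
    """Hypothetical 1-D task: drive a point to the origin.

    Mirrors Gymnasium's return conventions without importing gymnasium.
    """

    def __init__(self, max_steps: int = 50):
        self.max_steps = max_steps
        self.position = 0.0
        self.steps = 0
        self._rng = random.Random()

    def reset(self, seed: Optional[int] = None) -> Tuple[List[float], dict]:
        if seed is not None:
            self._rng.seed(seed)
        self.position = self._rng.uniform(-1.0, 1.0)
        self.steps = 0
        return [self.position], {}

    def step(self, action: float):
        # Clip the action to [-0.1, 0.1] and apply it as a displacement.
        self.position += max(-0.1, min(0.1, action))
        self.steps += 1
        distance = abs(self.position)
        terminated = distance < 0.01               # goal reached
        truncated = self.steps >= self.max_steps   # time limit hit
        return [self.position], -distance, terminated, truncated, {}


def rollout(env: Any, policy: Callable[[List[float]], Any], seed: int = 0) -> Tuple[float, int]:
    """Run one episode on any env that follows the reset/step contract."""
    obs, _info = env.reset(seed=seed)
    total_reward, length = 0.0, 0
    while True:
        obs, reward, terminated, truncated, _info = env.step(policy(obs))
        total_reward += reward
        length += 1
        if terminated or truncated:
            return total_reward, length


# A proportional controller stands in for a trained policy.
episode_return, episode_length = rollout(ToyLineEnv(), lambda obs: -obs[0])
```

Because rollout() relies only on that contract, passing an environment created with gymnasium.make instead of ToyLineEnv() needs no change to the loop, provided the policy returns actions from that environment's action space.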

PyBullet

PyBullet is a physics simulation engine that supports rigid body dynamics, soft body simulation, and collision detection. It is straightforward to wrap in a Gymnasium-style class to create custom robot environments. You can load URDF (Unified Robot Description Format) files to simulate real robot models such as the Franka Panda arm or a Boston Dynamics-style quadruped.

Stable-Baselines3 (SB3)

SB3 is one of the most widely used libraries for training RL agents in Python. It provides clean, tested PyTorch implementations of PPO, SAC, TD3, A2C, and DDPG. Combined with Gymnasium and PyBullet, it forms a complete training stack for robotic control policies.

ROS-Gym Bridge

Bridge packages such as gym_ros and ros2_gym connect a policy trained against a Gym-compatible environment to real robot hardware, passing observations and actions over ROS topics and services. This bridge is the critical link between the simulation world and physical deployment.

Architecture Overview

The Sim-to-Real Gap

The biggest challenge in Physical AI is the sim-to-real gap. Models trained in simulation encounter unexpected behavior in the real world due to differences in friction coefficients, sensor noise distributions, lighting conditions, and actuator dynamics. The standard mitigation strategies include domain randomization (randomly varying simulation parameters during training), system identification (calibrating simulation parameters to match real hardware), and adaptive control policies that can adjust to environmental shifts at inference time.
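Domain randomization can be sketched as drawing a fresh set of physics parameters at the start of every training episode. The parameter names and ranges below are illustrative assumptions rather than calibrated values; in a PyBullet environment, the sampled friction and mass would typically be applied in reset() via p.changeDynamics, and the noise level applied to each observation returned by step().

```python
import random
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple


@dataclass(frozen=True)
class PhysicsParams:
    friction: float             # lateral friction coefficient
    mass_scale: float           # multiplier on nominal link masses
    sensor_noise_std: float     # std-dev of Gaussian noise added to observations
    action_latency_steps: int   # control delay, in simulation steps


# Illustrative (assumed) ranges, centred on nominal values.
RANGES: Dict[str, Tuple[float, float]] = {
    "friction": (0.5, 1.2),
    "mass_scale": (0.8, 1.2),
    "sensor_noise_std": (0.0, 0.02),
}
LATENCY_RANGE = (0, 3)   # inclusive bounds


def sample_params(rng: random.Random) -> PhysicsParams:
    """Draw one randomized set of physics parameters for an episode."""
    return PhysicsParams(
        friction=rng.uniform(*RANGES["friction"]),
        mass_scale=rng.uniform(*RANGES["mass_scale"]),
        sensor_noise_std=rng.uniform(*RANGES["sensor_noise_std"]),
        action_latency_steps=rng.randint(*LATENCY_RANGE),
    )


def noisy_observation(obs: Sequence[float], params: PhysicsParams,
                      rng: random.Random) -> List[float]:
    """Corrupt a clean observation with the episode's sensor-noise level."""
    return [x + rng.gauss(0.0, params.sensor_noise_std) for x in obs]


rng = random.Random(42)
episode_params = [sample_params(rng) for _ in range(5)]
```

Seeding the generator keeps randomized runs reproducible. Ranges that are too narrow leave the policy brittle, while ranges that are too wide can make the task needlessly hard to learn; one common approach is to centre them on values obtained from system identification and widen them gradually.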

Detailed Code Sample with Visualization

The steps below outline a complete pipeline for training a robotic arm (simulated as a simplified 2-DOF reacher) with PyBullet and Stable-Baselines3, then exporting the trained policy and wrapping it in a ROS2-compatible node.

Step 1: Custom PyBullet Gymnasium Environment
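A minimal sketch of the environment follows. To keep it runnable without PyBullet, it replaces physics with planar forward kinematics for a 2-DOF arm; the link lengths, step size, reward shaping, and success threshold are all assumptions. The reset/step return values follow Gymnasium's conventions. In a PyBullet version, step() would instead send joint commands with p.setJointMotorControl2 and advance the world with p.stepSimulation.

```python
import math
import random
from typing import List, Optional, Tuple


class Reacher2DEnv:
    """Kinematic 2-DOF planar reacher using Gymnasium-style reset/step returns.

    Physics is replaced by forward kinematics: actions move joint angles directly.
    """

    def __init__(self, link_lengths: Tuple[float, float] = (0.1, 0.1),
                 max_delta: float = 0.05, max_steps: int = 200,
                 success_radius: float = 0.01):
        self.l1, self.l2 = link_lengths
        self.max_delta = max_delta            # max joint change per step (rad)
        self.max_steps = max_steps
        self.success_radius = success_radius  # distance counted as "reached" (m)
        self._rng = random.Random()
        self.q = [0.0, 0.0]                   # joint angles (rad)
        self.target = (0.0, 0.0)
        self.steps = 0

    def fingertip(self) -> Tuple[float, float]:
        """Forward kinematics: joint angles -> end-effector (x, y)."""
        q1, q2 = self.q
        x = self.l1 * math.cos(q1) + self.l2 * math.cos(q1 + q2)
        y = self.l1 * math.sin(q1) + self.l2 * math.sin(q1 + q2)
        return x, y

    def _obs(self) -> List[float]:
        fx, fy = self.fingertip()
        tx, ty = self.target
        # Joint angles, target position, and fingertip-to-target vector.
        return [self.q[0], self.q[1], tx, ty, fx - tx, fy - ty]

    def reset(self, seed: Optional[int] = None):
        if seed is not None:
            self._rng.seed(seed)
        self.q = [self._rng.uniform(-math.pi, math.pi) for _ in range(2)]
        # Sample a target inside the reachable annulus |l1 - l2| < r < l1 + l2.
        inner = abs(self.l1 - self.l2) + 0.02
        outer = self.l1 + self.l2 - 0.02
        radius = self._rng.uniform(inner, outer)
        angle = self._rng.uniform(-math.pi, math.pi)
        self.target = (radius * math.cos(angle), radius * math.sin(angle))
        self.steps = 0
        return self._obs(), {}

    def step(self, action):
        # Clip normalized actions to [-1, 1] and scale them to joint increments.
        a = [max(-1.0, min(1.0, float(u))) for u in action]
        self.q = [qi + self.max_delta * ai for qi, ai in zip(self.q, a)]
        self.steps += 1
        fx, fy = self.fingertip()
        distance = math.hypot(fx - self.target[0], fy - self.target[1])
        # Dense reward: negative distance minus a small control-effort penalty.
        reward = -distance - 0.01 * sum(ai * ai for ai in a)
        terminated = distance < self.success_radius
        truncated = self.steps >= self.max_steps
        return self._obs(), reward, terminated, truncated, {"distance": distance}
```

To train this class with SB3, it would need to subclass gymnasium.Env and declare observation_space and action_space (for example a Box of shape (2,) in [-1, 1] for the action); stable_baselines3.common.env_checker.check_env can then validate the result.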

Step 2: Training the Agent with Stable-Baselines3
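The training script below is a sketch, assuming Stable-Baselines3 2.x and using Gymnasium's MuJoCo-based Reacher-v4 (a 2-DOF reacher that needs gymnasium[mujoco]) as a stand-in for a custom environment; the timestep budget, number of parallel environments, hyperparameters, and output paths are placeholders. SB3 accepts a callable learning rate that receives the remaining training progress (1.0 at the start, 0.0 at the end), used here for a linear decay. The SB3 imports sit inside train() so the schedule helper can be used without SB3 installed.

```python
from typing import Callable


def linear_schedule(initial_value: float) -> Callable[[float], float]:
    """Return a learning-rate schedule for SB3.

    SB3 calls the schedule with progress_remaining, which goes from 1.0
    (start of training) down to 0.0 (end), so the rate decays linearly.
    """
    def schedule(progress_remaining: float) -> float:
        return progress_remaining * initial_value
    return schedule


def train(env_id: str = "Reacher-v4", total_timesteps: int = 200_000,
          n_envs: int = 4, log_dir: str = "logs", model_path: str = "ppo_reacher"):
    """Train a PPO policy on vectorized environments (requires stable-baselines3)."""
    from stable_baselines3 import PPO
    from stable_baselines3.common.env_util import make_vec_env

    # make_vec_env wraps each copy in a Monitor, which writes per-episode
    # reward/length CSV files into monitor_dir for later plotting.
    vec_env = make_vec_env(env_id, n_envs=n_envs, seed=0, monitor_dir=log_dir)
    model = PPO(
        "MlpPolicy",
        vec_env,
        learning_rate=linear_schedule(3e-4),
        n_steps=1024,      # rollout length per environment before each update
        batch_size=64,
        gamma=0.99,
        verbose=1,
        seed=0,
    )
    model.learn(total_timesteps=total_timesteps)
    model.save(model_path)   # SB3 appends ".zip" to the path
    vec_env.close()
    return model
```

Calling train() from a script or notebook runs the full loop. To train on your own environment instead, pass make_vec_env a callable that returns a gymnasium.Env instance (or a registered environment ID) in place of "Reacher-v4".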

Step 3: Exporting the Policy for Deployment
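One way to export is ONNX, following the wrapper pattern from the SB3 documentation. This sketch assumes a PPO model saved under the hypothetical path ppo_reacher.zip, with PyTorch available for export and numpy plus onnxruntime available on the deployment side. The exported graph contains only the actor's deterministic action. SB3's predict() clips actions to the action-space bounds, but that post-processing is not part of the exported network, so the deployment side needs its own clip_action step.

```python
from typing import Callable, List, Sequence


def export_onnx(model_path: str = "ppo_reacher.zip",
                onnx_path: str = "ppo_reacher.onnx") -> None:
    """Export a trained SB3 PPO actor to ONNX (requires torch + stable-baselines3)."""
    import torch as th
    from stable_baselines3 import PPO

    class OnnxablePolicy(th.nn.Module):
        def __init__(self, policy):
            super().__init__()
            self.policy = policy

        def forward(self, observation):
            # PPO's policy returns (actions, values, log_prob); keep only the
            # deterministic action. Action clipping is NOT part of this graph.
            actions, _values, _log_prob = self.policy(observation, deterministic=True)
            return actions

    model = PPO.load(model_path, device="cpu")
    dummy_input = th.randn(1, *model.observation_space.shape)
    th.onnx.export(OnnxablePolicy(model.policy), dummy_input, onnx_path,
                   opset_version=17, input_names=["observation"],
                   output_names=["action"])


def load_onnx_policy(onnx_path: str) -> Callable[[Sequence[float]], List[float]]:
    """Build an obs -> action callable from the exported file (numpy + onnxruntime)."""
    import numpy as np
    import onnxruntime as ort

    # Create the session once; reuse it for every control step.
    session = ort.InferenceSession(onnx_path, providers=["CPUExecutionProvider"])

    def policy(observation: Sequence[float]) -> List[float]:
        batch = np.asarray(observation, dtype=np.float32)[None, :]  # add batch dim
        (action,) = session.run(None, {"observation": batch})
        return action[0].tolist()

    return policy


def clip_action(action: Sequence[float], low: Sequence[float],
                high: Sequence[float]) -> List[float]:
    """Clip a raw network output to the action-space bounds before use."""
    if not (len(action) == len(low) == len(high)):
        raise ValueError("action and bounds must have the same length")
    return [min(max(a, lo), hi) for a, lo, hi in zip(action, low, high)]
```

TorchScript (torch.jit.trace on the same wrapper) is an alternative when the target runtime is LibTorch rather than an ONNX runtime.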

Step 4: ROS2 Inference Node
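A sketch of the inference node follows, assuming ROS2 with rclpy, a joint-state feed on /joint_states that includes both positions and velocities, and a controller that accepts std_msgs/Float64MultiArray velocity commands. The command topic, 50 Hz rate, velocity limit, staleness timeout, and placeholder policy are hypothetical. The safety logic lives in plain functions so it can be unit-tested without ROS; the node class is defined only when rclpy imports successfully.

```python
import time
from typing import Callable, List, Optional, Sequence

try:
    import rclpy
    from rclpy.node import Node
    from sensor_msgs.msg import JointState
    from std_msgs.msg import Float64MultiArray
    HAVE_ROS = True
except ImportError:
    HAVE_ROS = False

MAX_JOINT_VEL = 0.5   # rad/s, hypothetical hardware limit
STALE_AFTER_S = 0.2   # command zero velocity if feedback is older than this


def safe_command(action: Sequence[float], last_feedback_time: Optional[float],
                 now: float, max_vel: float = MAX_JOINT_VEL) -> List[float]:
    """Scale a normalized [-1, 1] action to joint velocities, stopping on stale data."""
    if last_feedback_time is None or now - last_feedback_time > STALE_AFTER_S:
        return [0.0] * len(action)
    return [max(-1.0, min(1.0, a)) * max_vel for a in action]


def placeholder_policy(observation: Sequence[float]) -> List[float]:
    """Hypothetical stand-in for the exported policy (e.g. an ONNX session)."""
    return [0.0, 0.0]


if HAVE_ROS:
    class PolicyNode(Node):
        def __init__(self, policy: Callable[[Sequence[float]], List[float]] = placeholder_policy):
            super().__init__("policy_inference_node")
            self.policy = policy
            self.observation: Optional[List[float]] = None
            self.last_feedback_time: Optional[float] = None
            self.joint_sub = self.create_subscription(
                JointState, "/joint_states", self.on_joint_state, 10)
            self.cmd_pub = self.create_publisher(
                Float64MultiArray, "/arm_controller/commands", 10)  # hypothetical topic
            self.timer = self.create_timer(0.02, self.control_step)   # 50 Hz loop

        def on_joint_state(self, msg: JointState) -> None:
            self.observation = list(msg.position) + list(msg.velocity)
            self.last_feedback_time = time.monotonic()

        def control_step(self) -> None:
            if self.observation is None:
                return  # no feedback received yet
            action = self.policy(self.observation)
            cmd = Float64MultiArray()
            cmd.data = safe_command(action, self.last_feedback_time, time.monotonic())
            self.cmd_pub.publish(cmd)

    def main() -> None:
        rclpy.init()
        node = PolicyNode()
        try:
            rclpy.spin(node)
        finally:
            node.destroy_node()
            rclpy.shutdown()
```

Decoupling the control timer from the subscription callback keeps the command rate fixed even when sensor messages arrive irregularly, and the staleness check stops the arm if feedback stops arriving.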

Step 5: Visualizing Training Performance
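A sketch of the plotting script follows, assuming training wrote SB3 Monitor files (the *.monitor.csv files make_vec_env creates when monitor_dir is set) into a logs directory, and that matplotlib is installed for the final chart. Episodes from parallel environments are merged by their elapsed-time column, and a trailing moving average (the window size is an arbitrary choice) smooths the noisy per-episode curves. SB3 also ships stable_baselines3.common.monitor.load_results, which returns a pandas DataFrame; the stdlib parser here simply avoids that dependency. The episode-length panel is only informative when the environment terminates early on success; with fixed-length episodes it stays flat.

```python
import csv
import glob
import io
import os
from typing import List, Tuple

Episode = Tuple[float, float, int]   # (elapsed time t, reward r, length l)


def read_monitor_csv(text: str) -> List[Episode]:
    """Parse one SB3 Monitor file: a '#'-prefixed JSON line, then 'r,l,t' rows."""
    body = "\n".join(line for line in text.splitlines() if not line.startswith("#"))
    return [(float(row["t"]), float(row["r"]), int(row["l"]))
            for row in csv.DictReader(io.StringIO(body))]


def moving_average(values: List[float], window: int) -> List[float]:
    """Trailing moving average; the first points average over what is available."""
    out, running = [], 0.0
    for i, v in enumerate(values):
        running += v
        if i >= window:
            running -= values[i - window]
        out.append(running / min(i + 1, window))
    return out


def plot_training(log_dir: str = "logs", window: int = 50,
                  out_path: str = "training_performance.png") -> None:
    """Save a two-panel chart: smoothed episode reward and episode length."""
    import matplotlib
    matplotlib.use("Agg")   # render straight to a file; no display needed
    import matplotlib.pyplot as plt

    episodes: List[Episode] = []
    for path in glob.glob(os.path.join(log_dir, "*.monitor.csv")):
        with open(path) as f:
            episodes.extend(read_monitor_csv(f.read()))
    episodes.sort(key=lambda e: e[0])   # interleave parallel envs by elapsed time

    rewards = [r for _, r, _ in episodes]
    lengths = [float(n) for _, _, n in episodes]
    fig, (ax_reward, ax_length) = plt.subplots(1, 2, figsize=(12, 4))
    ax_reward.plot(moving_average(rewards, window))
    ax_reward.set(title="Episode reward", xlabel="Episode", ylabel="Reward")
    ax_length.plot(moving_average(lengths, window))
    ax_length.set(title="Episode length", xlabel="Episode", ylabel="Steps")
    fig.tight_layout()
    fig.savefig(out_path)
    plt.close(fig)
```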

The resulting training-performance chart has two panels side by side. The left panel shows episode reward rising and then stabilizing as the agent learns to reach the target reliably. The right panel shows episode length falling over time, meaning the agent reaches the goal in fewer steps as training progresses. Together, the two curves are a useful check that the policy is converging before you commit to real-world deployment.

Pros of Physical AI with Python, ROS, and Gym

- Rapid Prototyping at Low Cost. Simulation environments like PyBullet and MuJoCo allow teams to run millions of training episodes at near-zero hardware cost, dramatically reducing the development cycle before any physical component is touched.

- Modular, Reusable Architecture. ROS2 nodes are independently deployable and replaceable. You can swap a camera driver, a planning module, or an inference node without rewiring the entire system.

- Massive Open-Source Ecosystem. The Python robotics ecosystem benefits from thousands of community-maintained packages, pre-trained URDF models, and reference implementations on GitHub that accelerate development significantly.

- Safety-First Training Paradigm. Training in simulation first protects expensive hardware from damage during the exploration phase of reinforcement learning, where agents frequently take suboptimal or random actions.

- Standardized RL Interface via Gymnasium. Because every environment exposes the same API, the same training and evaluation code can run across dozens of environments with little or no modification, making it easier to benchmark and compare algorithms and policies.

- Scalable Infrastructure. Vectorized training across multiple CPU- or GPU-backed simulation instances lets experiment throughput grow with available compute (rarely perfectly linearly, because of synchronization and learner overhead), enabling faster experiments and better final policies.

- Cross-Platform Portability. Policies exported as ONNX or TorchScript can be deployed on edge hardware, including ARM-based embedded systems, NVIDIA Jetson boards, and custom FPGAs, without dependency on full Python environments.

- Strong Domain Randomization Support. Python’s flexibility makes it easy to inject randomness into simulation parameters such as friction, mass, lighting, and sensor noise, which directly improves real-world robustness.

Industries Using Physical AI with ROS and Python

Healthcare and Surgical Robotics

Companies like Intuitive Surgical and Medtronic are integrating AI-driven motion planning into robotic surgery platforms. Python-based simulation pipelines allow surgeons and engineers to pre-validate robotic trajectories in virtual anatomical models before any procedure. Reinforcement learning policies trained in simulation are increasingly being evaluated for tasks like tissue retraction, suture assistance, and instrument navigation in minimally invasive surgeries.

Automotive and Autonomous Vehicles

Self-driving companies, including Waymo, Cruise, and Mobileye, use simulation-first pipelines extensively. While their stacks typically rely on proprietary simulators and middleware, the principles are the same: train perception and planning models in simulation, validate them in structured testing environments, then deploy on physical vehicles. Python is pervasive in their data and training pipelines, and ROS2 and custom Gym-style environments are common throughout the wider autonomous-driving research community.

Logistics and Warehousing

Amazon Robotics, Ocado, and Mujin use robotic pick-and-place systems that are trained using reinforcement learning in simulated warehouse environments. Tasks such as grasping irregularly shaped items, navigating dynamic shelving environments, and coordinating multi-robot fleets benefit enormously from sim-to-real RL pipelines built in Python.

Agriculture and Precision Farming

Startups like Carbon Robotics and FarmWise deploy intelligent field robots that identify and eliminate weeds using computer vision and precise actuator control. These robots are trained in simulated field environments and deployed on real agricultural machinery. ROS2 handles the real-time sensor fusion between LIDAR, GPS, and vision systems while Python models drive decision-making.

Manufacturing and Quality Control

ABB, FANUC, and Universal Robots integrate adaptive control policies trained with RL into their industrial arms. Tasks like adaptive welding path planning, assembly force control, and visual defect detection benefit from policies trained in simulation and deployed via ROS2 interfaces on real factory floors. Python-based vision pipelines using OpenCV and torchvision operate in the same ROS2 node graph as the control systems.

Defense and Search and Rescue

Autonomous UAVs and ground robots used in disaster response scenarios rely on sim-to-real training to learn navigation in rubble, unstable terrain, and GPS-denied environments. ROS2 provides the communication layer between onboard sensors, the planning stack, and the human operator interface, while Python-based RL policies govern local navigation decisions.

How PySquad Can Assist in This

Physical AI is a demanding discipline that requires deep expertise across reinforcement learning, robotics middleware, systems engineering, and hardware integration. PySquad brings all of these capabilities together under one roof, giving organizations a reliable partner to navigate the full journey from concept to deployment.

- PySquad has built custom ROS2 node architectures for clients across logistics, manufacturing, and healthcare sectors, ensuring that simulation-trained policies translate cleanly to real hardware control loops with minimal performance degradation.

- PySquad specializes in Gymnasium environment design from scratch. Whether you need a custom URDF robot model, a domain-randomized training curriculum, or a multi-agent coordination environment, PySquad engineers design environments tailored to your exact robot hardware and task requirements.

- PySquad has hands-on experience with Stable-Baselines3, RLlib, and CleanRL for training continuous control policies. PySquad knows which algorithm to use for which task type and how to tune hyperparameters efficiently to reduce your compute budget without sacrificing policy quality.

- PySquad handles the full sim-to-real pipeline. From PyBullet simulation setup and URDF calibration to TorchScript export and edge deployment on Jetson-class hardware, PySquad manages every step of the transition.

- PySquad builds robust domain randomization strategies that bridge the sim-to-real gap proactively. Rather than discovering deployment failures after the fact, PySquad stress-tests policies against a wide distribution of environmental parameters before any physical rollout begins.

- PySquad integrates Physical AI pipelines with enterprise data systems. Whether your team needs a ROS2 fleet management dashboard, a real-time telemetry pipeline, or a cloud-based training orchestration system, PySquad connects the robotics layer with your broader technology infrastructure.

- PySquad’s safety-first engineering culture means that every deployment checklist includes hardware-in-the-loop testing, velocity limit validation, emergency stop protocols, and fail-safe recovery behaviors before any robot is authorized to operate autonomously near humans.

- PySquad offers end-to-end project delivery from requirements scoping through prototyping, iteration, deployment, and ongoing support. PySquad does not hand you a trained model and walk away. PySquad stays engaged through integration, monitoring, and the inevitable edge cases that arise in production environments.

- PySquad’s team of Python specialists and ML engineers means you get cross-disciplinary expertise in a single engagement. PySquad eliminates the coordination overhead of working with multiple specialist vendors.

- Organizations that partner with PySquad gain not only a delivered solution but also internal knowledge transfer, documented codebases, and trained internal teams. PySquad believes that empowering your engineers is as important as shipping the software.


Conclusion

Physical AI represents one of the most consequential intersections of software and the physical world that our industry has ever attempted. The combination of Python's accessible syntax and rich ecosystem, ROS2's battle-tested robotics middleware, and Gymnasium's standardized RL interface gives practitioners a genuinely capable toolkit for building intelligent machines that operate outside server racks, in the real world.

The pipeline we walked through in this post covers the full arc of a real Physical AI project: designing a custom simulation environment, training a reinforcement learning policy with Stable-Baselines3, exporting that policy for deployment, wiring it into a ROS2 node that communicates with real hardware, and visualizing training progress to validate convergence. Each of these steps has its own depth, and each one matters for whether your agent succeeds or fails when it finally meets the unpredictable real world.